Abstract

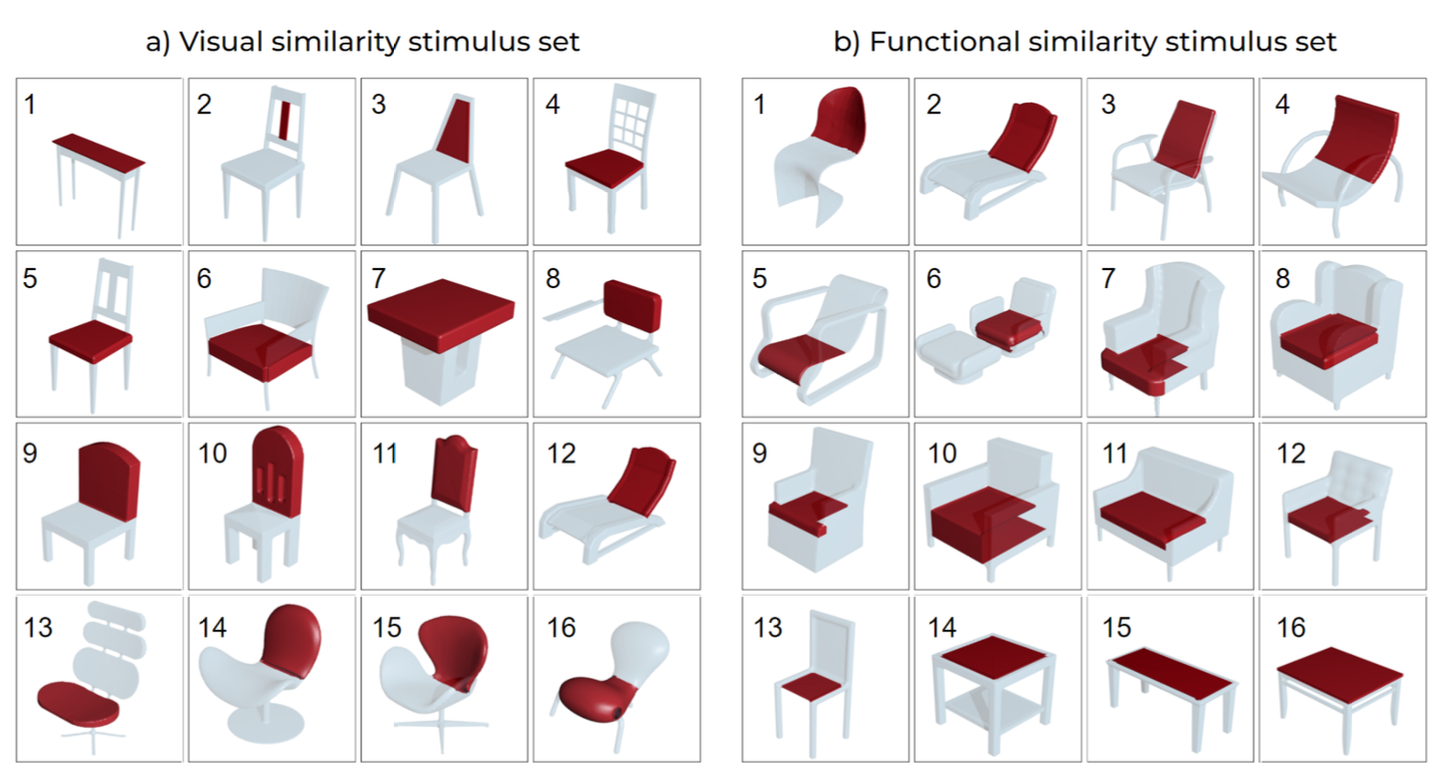

External sources of inspiration can promote the discovery of new ideas as designers ideate on a design task. Data-driven techniques increasingly enable the retrieval of inspirational stimuli based on non-text-based representations, going beyond the semantic features of stimuli. However, there is a lack of fundamental understanding of how humans evaluate similarity between non-semantic design stimuli (e.g., visual). To address this gap, this work examines human-evaluated and computationally derived representations of the visual and functional similarities of 3D-model parts. A study was conducted in which participants (n=36) provided triplet ratings of parts and categorized these parts into groups. Similarity is defined by distances within embedding spaces constructed from the triplet ratings (human representations) and from deep-learning methods (computational representations). Distances between stimuli that are grouped together (or not) are then computed to understand how the various methods and criteria used to define non-text-based similarity align with perceptions of ‘near’ and ‘far’. Distinct boundaries in computed distances separating stimuli that are ‘too far’ were observed, and these boundaries include farther stimuli when modeling visual as compared with functional attributes.